Top 13 Small Language Models (SLMs) for 2025 - Analytics Vidhya

Mar 15, 2025 am 09:53 AMThis year, compact language models (CLMs) like OpenAI's o1 have captured significant attention, demonstrating impressive natural language processing capabilities. However, many applications don't require the immense resources of larger models. Enter small language models (SLMs) – efficient, streamlined solutions ideal for budget-conscious applications and limited computational environments.

SLMs balance performance and efficiency. Optimized architecture and size make them perfect for edge devices, resource-constrained systems, and applications needing rapid inference. From powering mobile apps to providing offline NLP functionality, these models are democratizing advanced language technologies.

This blog explores 13 top-performing SLMs. Whether you're a developer seeking lightweight solutions or a researcher investigating efficient NLP, this list showcases that smaller can be better. Let's explore how these compact models are making a significant impact.

Table of Contents

- Versatile Multi-Task Performance (Translation, Summarization, Q&A)

- T5

- Qwen-2

- Llama 3.2

- Mistral Nemo

- Mistral Small 3

- Reasoning-Focused Tasks

- o3-mini

- Phi-4

- Text Generation

- DistilGPT-2

- SmolLM

- General NLU (Text Classification, Sentiment Analysis, Named Entity Recognition)

- MiniLM

- MobileBERT

- Microsoft Phi 3.5 Mini

- Gemma 2

- TinyBERT

- DistilBERT

- Frequently Asked Questions

For a deeper dive into SLMs, see: What are Small Language Models (SLMs)? Now, let's examine these 13 leading SLMs.

Versatile Multi-Task Performance (Translation, Summarization, Q&A)

T5

Google Research's T5 (Text-To-Text Transfer Transformer) is a versatile model using a unified text-to-text framework for various NLP tasks (translation, summarization, Q&A).

Parameter Size

T5 offers various sizes, from T5-Small (60 million parameters) to T5-11B (11 billion parameters), catering to diverse resource needs.

Architecture

T5's Transformer architecture uses encoder and decoder components, emphasizing flexibility by framing all tasks as text-to-text problems. Pre-training on a large dataset enhances its understanding.

Availability

T5 is open-source (Apache 2.0 license), accessible via TensorFlow and Hugging Face.

Qwen-2

Qwen-2 is an efficient CLM excelling in text generation, classification, and summarization, suitable for various applications. Its modular design is ideal for constrained hardware.

Parameter Size

Qwen-2 comes in 3 billion, 7 billion, and 13 billion parameter versions, offering scalability for different applications.

Architecture

Qwen-2's advanced Transformer architecture uses techniques like rotary positional embeddings and adaptive pre-normalization for speed and stability. Its modularity ensures adaptability.

Availability

Qwen-2 is open-source, with some advanced features available via subscription.

Llama 3.2

Llama 3.2 prioritizes high performance with resource efficiency, making it suitable for applications with lower computational overhead.

Parameter Size

Llama 3.2 offers versions ranging from 1.3 billion to 13 billion parameters, allowing users to choose based on their needs.

Architecture

Llama 3.2 uses Grouped Query Attention, Rotary Positional Embedding (RoPE), and SwiGLU activations for efficiency and performance.

Availability

Llama 3.2 is open-source, with a free tier and paid options for extended features and support.

Mistral Nemo

Mistral Nemo is a compact and efficient CLM designed for high-quality language understanding and generation, emphasizing performance and ease of integration.

Parameter Size

Mistral Nemo is available in 1.3 billion, 7 billion, and 13 billion parameter versions.

Architecture

Mistral Nemo's transformer-based architecture uses optimized attention mechanisms and enhanced token embeddings for efficient memory usage and throughput.

Availability

Mistral Nemo is open-source.

Mistral Small 3

Mistral Small 3 handles approximately 80% of generative AI tasks with modest hardware requirements.

Parameter Size

Mistral Small 3 has 24 billion parameters, offering performance comparable to much larger models. It's deployable on a single high-end GPU or a powerful laptop.

Architecture

Mistral Small 3 uses fewer layers than competing models for low-latency performance. It's available in pre-trained and instruction-tuned versions.

Availability

Mistral Small 3 is open-source (Apache 2.0 license), available on Hugging Face, Ollama, and Kaggle.

Reasoning-Focused Tasks

o3-mini

o3-mini is a compact model achieving high performance despite its reduced parameter count, making it suitable for resource-constrained devices.

Parameter Size

o3-mini's significantly reduced parameter count allows efficient operation on devices with limited resources.

Architecture

As part of OpenAI's reasoning model series, o3-mini supports text input/output and adjustable reasoning levels.

Availability

o3-mini is accessible via ChatGPT, OpenAI API, Microsoft Azure OpenAI Service, and Open Router.

Phi-4

Microsoft's Phi-4 (14 billion parameters) excels in reasoning tasks while maintaining computational efficiency.

Parameter Size

Phi-4's 14 billion parameters are optimized for reasoning efficiency and reduced computational demands.

Architecture and Training

Phi-4's architecture and training process, including synthetic data generation and refinement techniques, enhance its reasoning capabilities.

Availability

Phi-4 is currently proprietary.

Text Generation

DistilGPT-2

DistilGPT-2 is a smaller, more efficient version of GPT-2, retaining most of its capabilities while significantly reducing its size.

Parameter Size

DistilGPT-2 typically has around 82 million parameters, a significant reduction from GPT-2.

Architecture

DistilGPT-2 uses a similar Transformer architecture to GPT-2 but with fewer layers, achieved through knowledge distillation.

Availability

DistilGPT-2 is open-source (Hugging Face).

SmolLM

SmolLM is a lightweight model designed for efficient NLP with a reduced computational footprint.

Parameter Size

SmolLM offers various sizes, from 10 million to 300 million parameters.

Architecture

SmolLM uses transformer-based designs with pruning, quantization, and adaptive computation methods for efficiency.

Availability

SmolLM is open-source, with a free tier and paid options.

General NLU (Text Classification, Sentiment Analysis, Named Entity Recognition)

MiniLM

Microsoft's MiniLM is a compact and efficient model using knowledge distillation techniques.

Parameter Size

MiniLM offers various sizes, from 22 million to 384 million parameters.

Architecture

MiniLM uses a deep self-attention mechanism, incorporating knowledge distillation to transfer performance from a larger model.

Availability

MiniLM is open-source (Hugging Face, GitHub).

MobileBERT

MobileBERT is a lightweight adaptation of BERT, designed for resource-constrained devices.

Parameter Size

MobileBERT has approximately 25 million parameters.

Architecture

MobileBERT uses a bottleneck structure, inverted bottleneck layers, and a quadruple feed-forward network for efficiency.

Availability

MobileBERT is open-source.

Microsoft Phi 3.5 Mini

Microsoft Phi 3.5 Mini balances efficiency and performance for robust natural language understanding with limited resources.

Parameter Size

Phi 3.5 Mini comes in 1.3 billion and 3 billion parameter versions.

Architecture

Phi 3.5 Mini's Transformer architecture uses optimized attention mechanisms for efficiency.

Availability

Microsoft Phi 3.5 Mini is proprietary, integrated into Microsoft Azure AI services (free and paid tiers).

Gemma 2

Gemma 2 is designed for efficient NLU and generation tasks, balancing accuracy and speed.

Parameter Size

Gemma 2 offers versions with 125 million, 350 million, and 1.2 billion parameters.

Architecture

Gemma 2 uses a streamlined transformer architecture with dynamic attention heads and layer normalization enhancements.

Availability

Gemma 2 is open-source (permissive license), with free and premium options.

TinyBERT

TinyBERT is a distilled version of BERT, reducing computational complexity and memory footprint.

Parameter Size

TinyBERT's smallest version has around 14 million parameters, while a larger version has about 66 million.

Architecture

TinyBERT uses a similar Transformer architecture to BERT but with fewer layers and reduced dimensions.

Availability

TinyBERT is open-source (Apache License 2.0), accessible via Hugging Face Transformers.

DistilBERT

DistilBERT is a smaller, faster, and lighter version of BERT, retaining most of BERT's performance.

Parameter Size

DistilBERT has approximately 66 million parameters.

Architecture

DistilBERT simplifies BERT's architecture by reducing the number of layers and employing knowledge distillation.

Availability

DistilBERT is open-source (Hugging Face Transformers).

Conclusion

SLMs are revolutionizing NLP by offering a balance of performance, efficiency, and accessibility. Their suitability for resource-constrained environments makes them ideal for various applications. Open-source and proprietary models alike are driving innovation and expanding access to advanced language technologies. As AI adoption grows, SLMs will be crucial for scaling NLP efficiently and inclusively.

Frequently Asked Questions

Q1. Can small language models be used offline? A. Yes, their lightweight nature allows offline deployment on various devices.

Q2. How are small language models fine-tuned? A. Fine-tuning adapts a pre-trained model to a specific task using a smaller dataset.

Q3. Are small language models secure and private? A. Local deployment can enhance security and privacy, but implementation details are crucial.

The above is the detailed content of Top 13 Small Language Models (SLMs) for 2025 - Analytics Vidhya. For more information, please follow other related articles on the PHP Chinese website!

Hot AI Tools

Undress AI Tool

Undress images for free

Undresser.AI Undress

AI-powered app for creating realistic nude photos

AI Clothes Remover

Online AI tool for removing clothes from photos.

Clothoff.io

AI clothes remover

Video Face Swap

Swap faces in any video effortlessly with our completely free AI face swap tool!

Hot Article

Hot Tools

Notepad++7.3.1

Easy-to-use and free code editor

SublimeText3 Chinese version

Chinese version, very easy to use

Zend Studio 13.0.1

Powerful PHP integrated development environment

Dreamweaver CS6

Visual web development tools

SublimeText3 Mac version

God-level code editing software (SublimeText3)

Hot Topics

1793

1793

16

16

1735

1735

56

56

1585

1585

29

29

267

267

587

587

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

AI Investor Stuck At A Standstill? 3 Strategic Paths To Buy, Build, Or Partner With AI Vendors

Jul 02, 2025 am 11:13 AM

Investing is booming, but capital alone isn’t enough. With valuations rising and distinctiveness fading, investors in AI-focused venture funds must make a key decision: Buy, build, or partner to gain an edge? Here’s how to evaluate each option—and pr

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

AGI And AI Superintelligence Are Going To Sharply Hit The Human Ceiling Assumption Barrier

Jul 04, 2025 am 11:10 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). Heading Toward AGI And

Build Your First LLM Application: A Beginner's Tutorial

Jun 24, 2025 am 10:13 AM

Build Your First LLM Application: A Beginner's Tutorial

Jun 24, 2025 am 10:13 AM

Have you ever tried to build your own Large Language Model (LLM) application? Ever wondered how people are making their own LLM application to increase their productivity? LLM applications have proven to be useful in every aspect

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Kimi K2: The Most Powerful Open-Source Agentic Model

Jul 12, 2025 am 09:16 AM

Remember the flood of open-source Chinese models that disrupted the GenAI industry earlier this year? While DeepSeek took most of the headlines, Kimi K1.5 was one of the prominent names in the list. And the model was quite cool.

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Future Forecasting A Massive Intelligence Explosion On The Path From AI To AGI

Jul 02, 2025 am 11:19 AM

Let’s talk about it. This analysis of an innovative AI breakthrough is part of my ongoing Forbes column coverage on the latest in AI, including identifying and explaining various impactful AI complexities (see the link here). For those readers who h

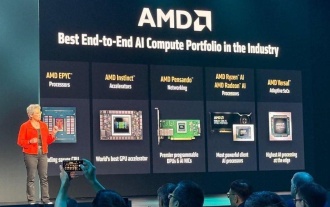

AMD Keeps Building Momentum In AI, With Plenty Of Work Still To Do

Jun 28, 2025 am 11:15 AM

AMD Keeps Building Momentum In AI, With Plenty Of Work Still To Do

Jun 28, 2025 am 11:15 AM

Overall, I think the event was important for showing how AMD is moving the ball down the field for customers and developers. Under Su, AMD’s M.O. is to have clear, ambitious plans and execute against them. Her “say/do” ratio is high. The company does

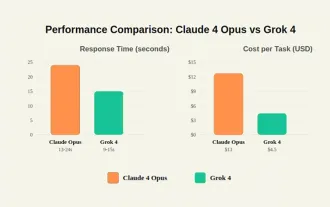

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

Grok 4 vs Claude 4: Which is Better?

Jul 12, 2025 am 09:37 AM

By mid-2025, the AI “arms race” is heating up, and xAI and Anthropic have both released their flagship models, Grok 4 and Claude 4. These two models are at opposite ends of the design philosophy and deployment platform, yet they

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

Chain Of Thought For Reasoning Models Might Not Work Out Long-Term

Jul 02, 2025 am 11:18 AM

For example, if you ask a model a question like: “what does (X) person do at (X) company?” you may see a reasoning chain that looks something like this, assuming the system knows how to retrieve the necessary information:Locating details about the co